Why the Next Frontier in AI Is Not Intelligence, but Reliability

- The Productivity Paradox

- The Invisible Workforce

- The Prototype Illusion

- The Cognitive Overload Problem

- Learning From Engineering

- Cognitive Gates

- The Real Frontier

AI has made starting work almost free.

But it has quietly made supervising that work far harder.

Artificial intelligence has dramatically lowered the cost of starting cognitive work:

Write a document.

Generate a dataset analysis.

Prototype a system.

Spin up an agent.

Tasks that once took days now take minutes or hours.

But something else has shifted alongside this acceleration.

The amount of work we produce has expanded far faster than our ability to supervise it.

And that creates a new kind of risk.

The Productivity Paradox

Automation was supposed to give us time back.

Historically, however, technology rarely reduces work. It amplifies it.

Email made communication cheaper.

So we sent vastly more emails.

Spreadsheets made analysis easier.

So we ran far more models.

AI appears to be following the same pattern.

It lowers the cost of cognitive work, which leads us to initiate far more of it.

The result is something many people are beginning to feel:

More productive than ever.

Also more cognitively exhausted than ever.

AI increases the density of work, not the amount of free time.

The Invisible Workforce

Using AI today often resembles managing a large team.

Except that the discipline of supervising this team has not caught up yet.

Across organizations we now generate:

- code

- analyses

- reports

- prototypes

- strategic summaries

- documentation for systems that did not exist an hour earlier

Often dozens of outputs per day.

Yet we do not consistently ask the same questions that engineering managers ask their teams:

- What exactly was produced?

- What assumptions does it rely on?

- Has it been validated?

- Is it reliable?

Without realizing it, many organizations are now operating with an invisible workforce: thousands of AI-generated outputs shaping analyses, systems, and decisions.

And like any workforce, it requires governance.
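One way to make the four questions above routine rather than ad hoc is to attach a small provenance record to every AI-generated output. The sketch below is a hypothetical illustration, not an established tool or the author's method: the `OutputRecord` class, its field names, and the example artifact are assumptions chosen only to mirror those questions.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class OutputRecord:
    """Hypothetical provenance record for one AI-generated output.

    Each field mirrors one supervision question: what was produced,
    what it assumes, whether it was validated, and whether it is reliable.
    """
    artifact: str                                         # What exactly was produced?
    assumptions: List[str] = field(default_factory=list)  # What does it rely on? (empty = not yet recorded)
    validated_by: Optional[str] = None                    # Has it been validated, and how?
    reliable: Optional[bool] = None                       # Is it reliable? None = not yet judged.

    def open_questions(self) -> List[str]:
        """Return the supervision questions this record cannot yet answer."""
        missing = []
        if not self.assumptions:
            missing.append("What assumptions does it rely on?")
        if self.validated_by is None:
            missing.append("Has it been validated?")
        if self.reliable is None:
            missing.append("Is it reliable?")
        return missing


record = OutputRecord(artifact="quarterly churn analysis (notebook)")
print(record.open_questions())
```

An output whose record still has open questions is exactly the kind of invisible work described above: it shapes analyses and decisions without anyone having answered the basic supervision questions.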

The Prototype Illusion

AI is extremely good at producing convincing prototypes.

Demos appear quickly.

Systems appear functional.

Agents appear intelligent, sometimes convincingly so.

But many of them are not robust systems.

They are fragments of systems.

What looks like an MVP may lack:

- structured testing

- semantic validation

- edge-case robustness

- traceability of reasoning

The result is a growing number of systems that appear impressive while carrying hidden fragility.

Earlier generations of generative media, the so-called "AI slop", often failed in visible ways.

Distorted images.

Strange artifacts.

Unnatural motion.

Reasoning systems fail differently.

Their errors are semantic rather than visual.

And humans are not particularly good at spotting them.

This limitation reminds me of the illusion of explanatory depth, documented by Rozenblit and Keil (2002): our tendency to believe we understand complex systems far better than we actually do.

AI amplifies that illusion.

The challenge, therefore, is not simply generating intelligent outputs. It is ensuring that the systems producing them remain reliable as complexity increases.

The Cognitive Overload Problem

We are entering an era of unprecedented cognitive throughput.

AI now allows us to generate:

- more analyses

- more code

- more hypotheses

- entire experimental pipelines

- strategy documents and reports at scale

But our ability to verify and supervise those outputs has not increased proportionally.

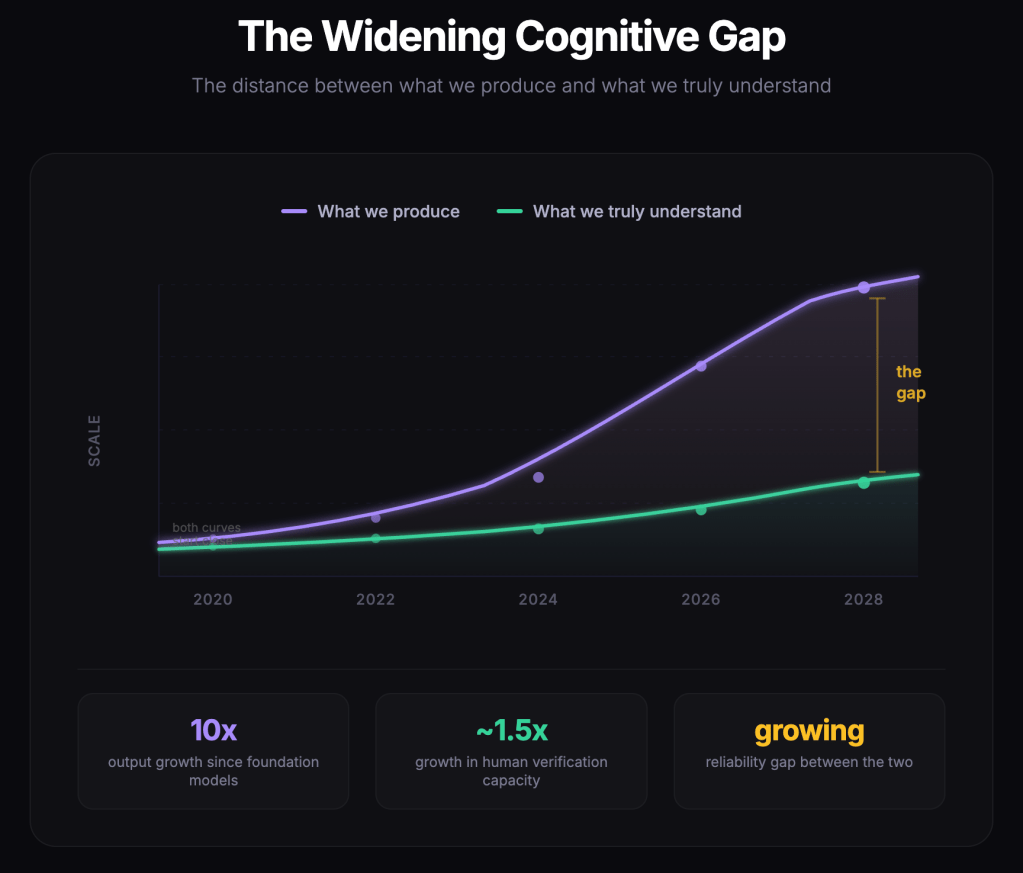

This creates a widening gap between what we produce and what we truly understand.

Unchecked complexity has always been dangerous in engineering systems.

AI simply moves that complexity into the cognitive domain.

Figure 1. The Widening Cognitive Gap. As the cost of cognitive work approaches zero, the amount of work we produce increases far faster than our ability to verify it. The resulting reliability gap between output and understanding continues to widen.

Learning From Engineering

Other engineering disciplines have encountered similar problems.

When systems become too complex for individuals to supervise directly, engineers introduce abstractions that guarantee reliability.

Electric power systems are extraordinarily complex.

Yet most users interact with electricity through a simple abstraction: a plug.

We do not trace individual electrons 😄

Instead, engineers built layers of infrastructure that guarantee reliability without requiring users to understand the entire system.

AI systems will likely require similar abstractions.

Cognitive Gates

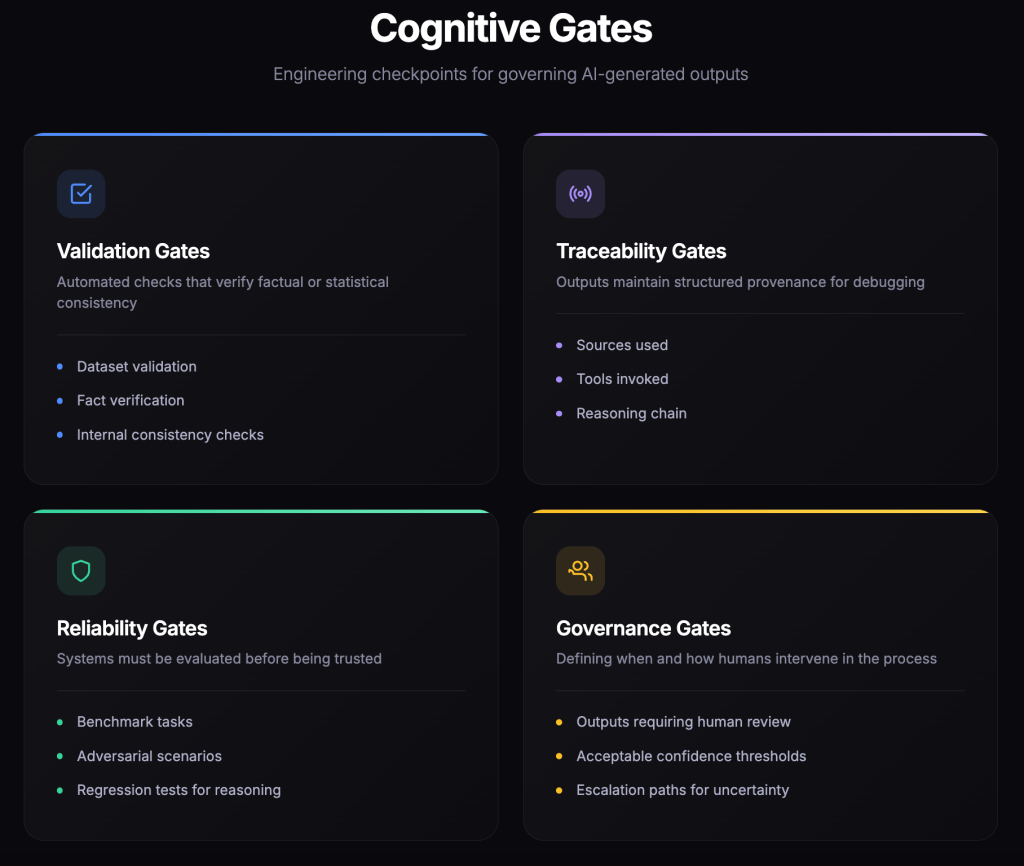

One idea I have been exploring recently is what I call cognitive gates.

The intuition is simple.

Instead of attempting to manually supervise every AI-generated output, we establish structured, well-defined checkpoints that verify semantic reliability before outputs propagate further.

These checkpoints might verify things such as:

- factual or statistical consistency

- traceability of reasoning

- benchmark performance

- governance thresholds for human oversight
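The checkpoints above can be sketched as a small gate function. This is a minimal, hypothetical illustration rather than the author's implementation: `cognitive_gate`, `GateResult`, the check names, the dict layout of the output, and the `risk` score are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class GateResult:
    passed: bool              # True if every automated check passed
    failures: List[str]       # names of the checks that failed
    needs_human_review: bool  # True if a person must look before the output propagates


# A check takes the output (here simply a dict) and returns True if it passes.
Check = Callable[[Dict], bool]


def cognitive_gate(output: Dict, checks: Dict[str, Check], risk_threshold: float) -> GateResult:
    """Run every check on an AI-generated output before it propagates further.

    Hypothetical sketch: the output dict, the check names, and the
    'risk' score are illustrative assumptions, not a standard schema.
    """
    failures = [name for name, check in checks.items() if not check(output)]
    # Governance threshold: failed or high-risk outputs are routed to a human.
    needs_review = bool(failures) or output.get("risk", 0.0) >= risk_threshold
    return GateResult(passed=not failures, failures=failures, needs_human_review=needs_review)


# Illustrative checks, one per kind of checkpoint listed above.
checks = {
    # Statistical consistency: reported segment shares should sum to ~100%.
    "shares_sum_to_100": lambda o: abs(sum(o["shares"]) - 100.0) < 0.5,
    # Traceability: every claim must cite at least one source.
    "claims_have_sources": lambda o: all(c.get("sources") for c in o["claims"]),
    # Benchmark performance: score on a reference task must meet a minimum.
    "benchmark_ok": lambda o: o["benchmark_score"] >= 0.8,
}

report = {
    "shares": [40.0, 35.0, 20.0],  # sums to 95: internally inconsistent
    "claims": [{"text": "Churn rose in Q3", "sources": ["crm_export.csv"]}],
    "benchmark_score": 0.86,
    "risk": 0.3,
}

result = cognitive_gate(report, checks, risk_threshold=0.7)
print(result)
```

Routing both failed outputs and high-risk outputs to a person, instead of silently blocking or silently passing them, keeps human attention focused on the cases the gate cannot vouch for.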

The goal is not to slow down AI-assisted work.

It is to build engineering abstractions that restore confidence in the outputs we rely on.

Reliable AI systems require multiple layers of evaluation:

- automated validation checks

- adversarial testing of reasoning

- benchmark tasks and regression tests

- structured human review loops
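Of these layers, benchmark tasks and regression tests are the easiest to automate. A minimal sketch, assuming the system under test can be called as a plain Python function and that each benchmark case has a single exact expected answer (both simplifications); `run_regression`, `toy_system`, the cases, and the baseline figure are hypothetical.

```python
from typing import Callable, List, Tuple

# Each benchmark case pairs an input with the answer we expect.
BenchmarkCase = Tuple[str, str]


def run_regression(system: Callable[[str], str],
                   cases: List[BenchmarkCase],
                   baseline_pass_rate: float,
                   tolerance: float = 0.02) -> Tuple[bool, float, List[str]]:
    """Compare a system's benchmark pass rate against a stored baseline.

    Returns (ok, pass_rate, failed_inputs). 'ok' is False when the pass
    rate falls more than `tolerance` below the baseline (a regression).
    Failed inputs are returned so they can be queued for human review.
    """
    failed = [prompt for prompt, expected in cases if system(prompt).strip() != expected]
    pass_rate = 1 - len(failed) / len(cases)
    ok = pass_rate >= baseline_pass_rate - tolerance
    return ok, pass_rate, failed


# Hypothetical stand-in for a model call; a real system would query a model here.
def toy_system(prompt: str) -> str:
    answers = {"2+2": "4", "capital of France": "Paris", "3*5": "16"}
    return answers.get(prompt, "")


cases = [("2+2", "4"), ("capital of France", "Paris"), ("3*5", "15"), ("10-7", "3")]
ok, rate, failed = run_regression(toy_system, cases, baseline_pass_rate=0.75)
print(ok, rate, failed)
```

Exact string matching is the crudest possible comparison. Most semantic outputs need tolerant scoring or a rubric, which is where AI can help grade AI, and where the failed cases still benefit from a human look.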

AI can help test AI.

But it cannot be the entire solution.

At some point a human must remain in the loop: not necessarily to inspect every output, but to evaluate the system itself and challenge its assumptions.

In other words, our end users should not be our alpha or beta testers.

Figure 2. Cognitive Gates. Engineering checkpoints that screen AI-generated outputs through automated validation, traceability of reasoning, reliability testing, and governance mechanisms for human oversight.

The Real Frontier

Much of the current discussion around AI focuses on model intelligence.

But the next wave of progress may come from something else entirely.

Not making models dramatically smarter.

But building the infrastructure required to trust the work they produce.

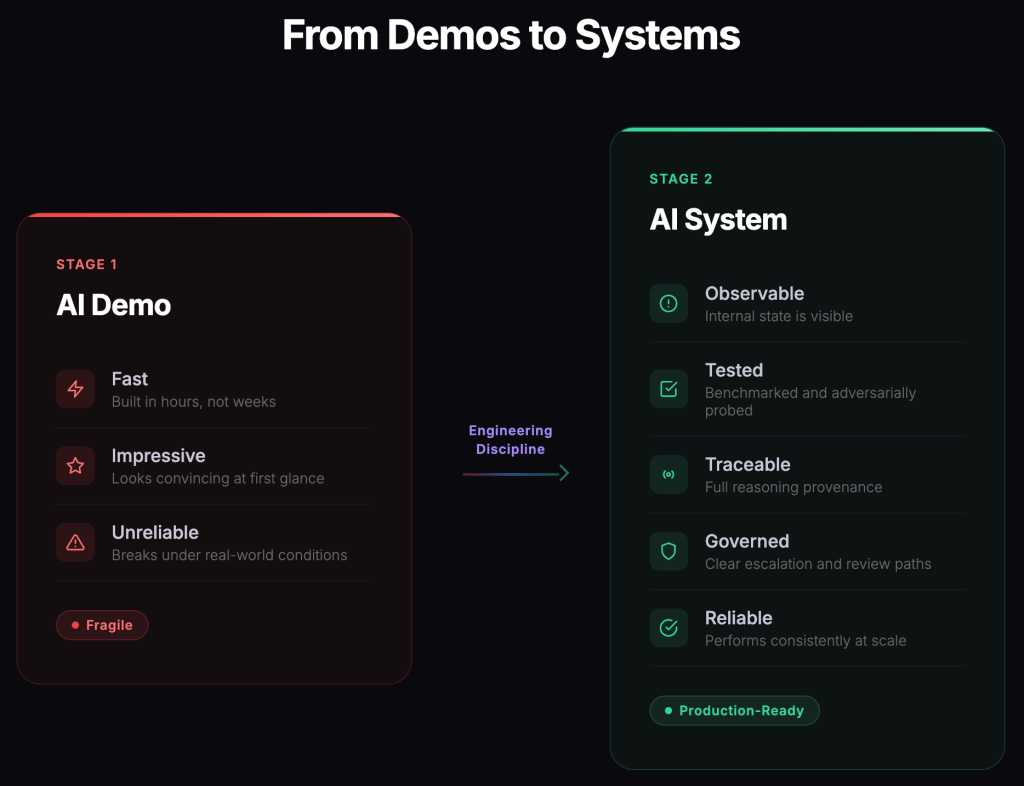

Today many AI systems exist primarily as demonstrations: impressive at first glance, but fragile under real-world conditions.

Transforming those demonstrations into robust systems requires engineering discipline.

Figure 3. From Demos to Systems. Reliable AI systems require engineering discipline: observability, testing, traceability, governance, and operational robustness.

The organizations that succeed in the coming decade will not simply deploy the most AI.

- They will learn how to govern cognitive scale.

- They will build systems where productivity can grow without reliability collapsing.

The real bottleneck is no longer generating ideas.

It is knowing when to trust them.

Solving that problem may require more than better prompts or larger models.

It may require an entirely new layer of infrastructure.

A form of cognitive engineering: the discipline of building systems that allow humans and AI to reason, analyze, and operate together at scale without losing reliability.

If that infrastructure emerges, today’s AI prototypes may eventually look less like the final destination… and more like the early electrical experiments that preceded the modern power grid 🙂
